Don’t Believe Everything You See Online

Not long ago, the internet had a simple rule for separating fact from fiction: “Pics or it didn’t happen.”

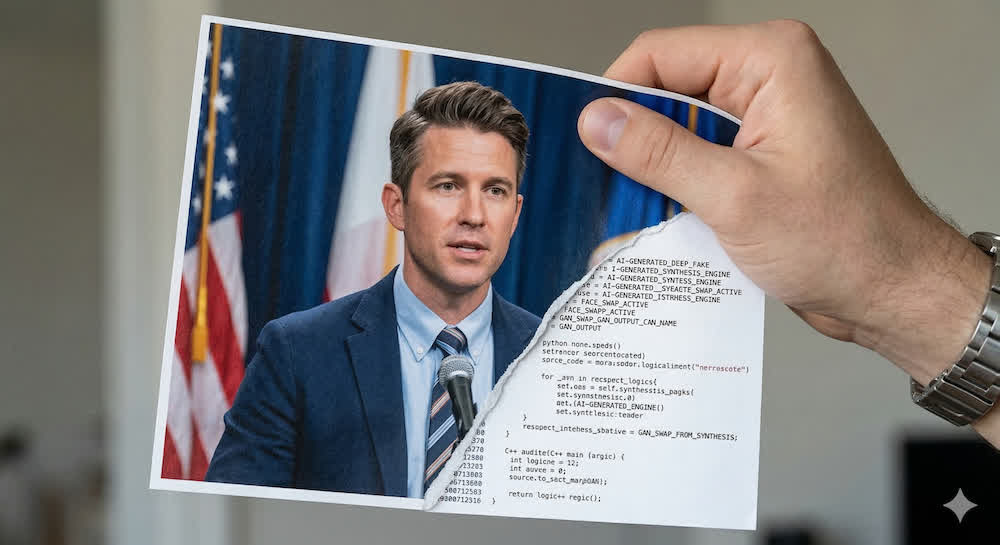

Those were simpler times. With the advent of AI image, video, and voice generators, it’s getting harder by the day to tell what’s real, what’s completely fake, and what’s real but slightly modified.

In the days following the US and Israel’s joint military strike on Iran earlier this month, social media was flooded with images and videos supposedly documenting the conflict. Some were recycled from unrelated events. Some were AI-generated. And in at least one case, the “combat footage” being shared as breaking news was actually gameplay from War Thunder, a military-themed video game.

Craig Silverman, co-founder of the open-source intelligence platform Indicator, put it this way in a recent Verge article: “The average person needs to understand that the current information environment is tilted towards manipulation and deception. This requires you to scroll with an awareness of how easily images, video, and text can be manipulated.”

While our work may not be as serious as war, the stakes for business leaders are a lot higher than just getting fooled by a viral meme. Think about a deepfake of your CEO saying something they never said, or a voice clone that convinces your finance team to wire money to the wrong account. That kind of fraud isn’t hypothetical, either. In a case covered by the South China Morning Post, an employee at the Hong Kong office of the engineering firm Arup sent about $25 million after being fooled by a deepfake video call impersonating the company’s CFO.

But there are people working to fight back: organizations like The New York Times, Bellingcat (which calls itself “the home of online investigations”), and Indicator (a news site about “digital deception”) have started publishing their verification methods. Here are four tactics they recommend that can leave us all a little better protected the next time we scroll:

Step 1: Look Very Closely

AI still struggles with the physics of the real world. Check backgrounds carefully, including architecture, figures, and signage. When The New York Times scrutinized unverified images of Nicolás Maduro after his US detention in January, one image gave itself away through aircraft windows that were the wrong size. Edges of people and objects can also look subtly off in ways your eye will catch if you’re paying attention.

Step 2: Consider the Source

Spend 30 seconds on the account that shared something before you trust it. Convincing deepfakes are a recent phenomenon, so the accounts pushing them were often created around the same time the AI tools behind them launched. Researchers call this the “Account Age Paradox.” A new account with no posting history that is suddenly sharing explosive content is a red flag. Even verified accounts aren’t a guarantee (especially on X).
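For teams that like a written checklist, the 30-second account check can be sketched in a few lines of Python. This is only an illustration: the `Account` fields, the 90-day cutoff, and the 10-post cutoff are assumptions made up for this example, not figures from the researchers or from any platform’s API.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Account:
    """Hypothetical summary of what you can read off a profile page."""
    created: date     # when the account was opened
    post_count: int   # posts made before the one you are checking


def red_flags(account: Account, today: date,
              max_new_days: int = 90, min_posts: int = 10) -> list[str]:
    """Return reasons to slow down. Both thresholds are illustrative assumptions."""
    flags = []
    age_days = (today - account.created).days
    if age_days < max_new_days:
        flags.append(f"account is only {age_days} days old")
    if account.post_count < min_posts:
        flags.append(f"only {account.post_count} prior posts")
    # A verified badge is deliberately not used to clear an account.
    return flags


suspect = Account(created=date(2025, 6, 1), post_count=2)
print(red_flags(suspect, today=date(2025, 6, 20)))
```

An empty list doesn’t mean the content is real. It only means this particular check found nothing, so the other steps still apply.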

Step 3: Check the Digital Footprint

Reverse image search is one of the most powerful and underused tools any of us has. Images leave a footprint online, and they’re easy to look up. To try it out for yourself:

- Desktop: In your browser, right-click the image and choose the option to search it with Google.

- Mobile: Use the Google Lens feature in the Google app.

Bellingcat recently traced a post claiming to show missiles striking an Israeli nuclear facility back to Ukrainian ammunition depot footage from 2017. The original source is almost always out there if you look for it, unless it’s been recently generated by AI.

Step 4: Trust Your Gut, Then Verify It

If a piece of content triggers an immediate strong reaction like outrage, fear, or shock, treat that as a signal to slow down and verify that what you’re seeing is authentic. Often the #1 goal of fake content is to get us fired up, so if that’s what you’re feeling, it may be a sign that its creators are succeeding. Silverman’s advice: awareness and patience don’t require tools or expertise, but they do require practice.

So What?

Here are two habits worth building to protect yourself:

- Personally: pause before you share anything that triggers a strong emotional reaction.

- Organizationally: have this conversation with your team. Your employees are gathering information, making decisions, and sharing content on behalf of your brand every day, often without any framework for evaluating what’s real. Walking them through these steps could prevent the kind of reputational damage that takes years to undo.

Seeing is no longer believing, but a little skepticism goes a long way.